Serverless functions means deploying individual functions to a serverless function provider such as AWS Lambda. For this, our technology stack uses Serverless Framework, Middy, TypeScript, and Webpack, all of which are configured by Serverless Framework’s aws-nodejs-typescript template. This is in contrast to deploying a monolithic application, such as NestJS with Express/Fastify and serverless-express, which can still be deployed to AWS Lambda.

A huge problem in serverless technology is not technical but financial: Denial-of-Wallet attacks.

How to Create A TypeScript Serverless Functions Project

Install Serverless Framework CLI:

yarn global add serverless

The Serverless Functions project can contain multiple functions. For the project itself, Hendy recommends the format {brand}-{noun}. The noun should not be an active verb: for example, use “time-tracker” instead of “track-time”. Individual functions are usually named using active verbs. Prefixing with the brand name makes the project easier to locate, because we will potentially have hundreds or thousands of AWS Lambda functions.

serverless create -t aws-nodejs-typescript -p talentiva-time-tracker

cd talentiva-time-tracker

yarn

This will create a TypeScript Serverless project with the middy middleware framework, API Gateway proxying using the REST (not HTTP) API type, a Webpack build, and the us-east-1 region configured.

Add more rules using this gitignore.

Note that the default stage in AWS Lambda is “dev”, and not “development”.

Edit this section of serverless.ts to set the region, depending on your project requirements:

provider: {
  name: 'aws',
  region: 'ap-southeast-1',
  // ...other provider settings
},

@serverless/typescript vs serverless-plugin-typescript

@serverless/typescript is from Serverless Framework, so we are using this by default.

There is also serverless-plugin-typescript, a contributed plugin that is more mature and works well with serverless-offline.

useDotenv: true

To make local testing more convenient, you will want to set useDotenv: true in serverless.ts:

useDotenv: true,

Test A Function Locally

# Invoke local using mock data file (recommended)
yarn sls invoke local -f hello -p src/functions/hello/mock.json

# Invoke local using literal
yarn sls invoke local -f hello -d '{"body": {"name": "Hendy"}}'

Sample response:

{
  "statusCode": 200,
  "body": "{\"message\":\"Hello Hendy, welcome to the exciting Serverless world!\",\"event\":{\"body\":{\"name\":\"Hendy\"}}}"
}

Creating A New Function

You can either modify the code in src/functions/hello/handler.ts, or create your own handler. Here are the steps:

- Create a folder inside src/functions. Hendy recommends a camelCase name that starts with an active verb, e.g. reviewTimeLogs.

- Inside that folder you have 4 files:
  - handler.ts. The primary code.
  - index.ts. The mapping for the Lambda trigger.
  - mock.json. Sample mock data for testing (warning: don’t put secrets here!).
  - schema.ts. JSON Schema for the input data. It is recommended so that your handler.ts can get type checking in VS Code. This is optional if you’re in a hurry, but then in handler.ts, use “any” instead of “typeof schema”.

- In serverless.ts, import your function and add it to the serverlessConfiguration.functions section.

- In src/functions/index.ts, export your function (Hendy thinks this is redundant), for example: export { default as reviewTimeLogs } from './reviewTimeLogs';
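As a rough sketch of the steps above, the trigger mapping and registration for a hypothetical reviewTimeLogs function might look like the following. The `userId` schema field, the `reviewTimeLogs` HTTP path, and the literal handler path are assumptions for illustration; in the template the path is built with `handlerPath(__dirname)` and each object lives in its own file.

```typescript
// Hypothetical src/functions/reviewTimeLogs/schema.ts (the field is an assumption)
const schema = {
  type: 'object',
  properties: {
    userId: { type: 'string' },
  },
  required: ['userId'],
} as const;

// Hypothetical src/functions/reviewTimeLogs/index.ts.
// The template builds this path with handlerPath(__dirname); a literal is
// used here so the sketch stands alone.
const reviewTimeLogs = {
  handler: 'src/functions/reviewTimeLogs/handler.main',
  events: [
    {
      http: {
        method: 'post',
        path: 'reviewTimeLogs',
        request: {
          schema: { 'application/json': schema },
        },
      },
    },
  ],
};

// In serverless.ts, the function is added to the functions section,
// next to the template's hello function.
const serverlessConfiguration = {
  service: 'talentiva-time-tracker',
  functions: { reviewTimeLogs },
};
```

The template's own hello function, shown next, follows the same layout.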

handler.ts

import 'source-map-support/register';

import type { ValidatedEventAPIGatewayProxyEvent } from '@libs/apiGateway';
import { formatJSONResponse } from '@libs/apiGateway';
import { middyfy } from '@libs/lambda';

import schema from './schema';

const hello: ValidatedEventAPIGatewayProxyEvent<typeof schema> = async (event) => {
  return formatJSONResponse({
    message: `Hello ${event.body.name}, welcome to the exciting Serverless world!`,
    event,
  });
};

export const main = middyfy(hello);

index.ts

import schema from './schema';
import { handlerPath } from '@libs/handlerResolver';

export default {
  handler: `${handlerPath(__dirname)}/handler.main`,
  events: [
    {
      http: {
        method: 'post',
        path: 'hello',
        request: {
          schema: {
            'application/json': schema
          }
        }
      }
    }
  ]
}

mock.json

{
  "headers": {
    "Content-Type": "application/json"
  },
  "body": "{\"name\": \"Frederic\"}"
}

schema.ts

export default {
  type: 'object',
  properties: {
    name: { type: 'string' }
  },
  required: ['name']
} as const;

Watch & Redeploy Serverless Functions Automatically

For rapid development, Hendy recommends deploying specific functions with sls-dev-tools (that is, “serverless deploy”-ing them) rather than “running the server locally”. There are several reasons for this:

- development is going cloud based (Gitpod, AWS Cloud9, Realm, etc.). See Improving the serverless developer experience with sls-dev-tools | AWS Blog.

- replicating the development environment locally takes too much time, effort, and bandwidth (developer’s slow Internet) compared to cloud environments

- several (micro)services need to be running as dependencies, and configuring and running all of them slows development

- services depend on a database, like MongoDB, and setting it up and loading data slows development. The alternative is using a remote MongoDB from a local app instance, but then it will be slow.

- even after all of that has been configured, you’ll still need to configure .env. By using a remote serverless development environment instead of a local one, app configuration can be set in the remote instance.

- running everything locally requires beefy developer machines; otherwise it slows development and makes developers extremely unproductive

- CI tools work best when they run alongside the developer at all times, not “separated”

- when working in the same (development) environment, it’s actually desirable to let other developers’ changes affect our work “in real time”, including breaking changes. The most desirable ones are bug fixes and improvements/features that take effect immediately.

- no need to be confused by the “it works on my machine” problem, since the code always runs on a remote cloud environment with dev-prod parity (12 factor)

- some services like MongoDB Realm, AWS SQS, Twilio, etc., just can’t run locally anyway

The cons: Having a remote development environment does cost money. However, this should be offset by more developer productivity -> faster feature-to-market!

Do an initial deploy of your project:

serverless deploy -s dev

Install sls-dev-tools in your project:

yarn add --dev sls-dev-tools

Now run sls-dev-tools:

yarn sls-dev-tools

Now, every time you change a function, you can choose the Lambda function and press “d” to deploy only that function. That will be pretty fast, especially if you use a cloud IDE like Gitpod. That’s why using a cloud IDE is recommended!

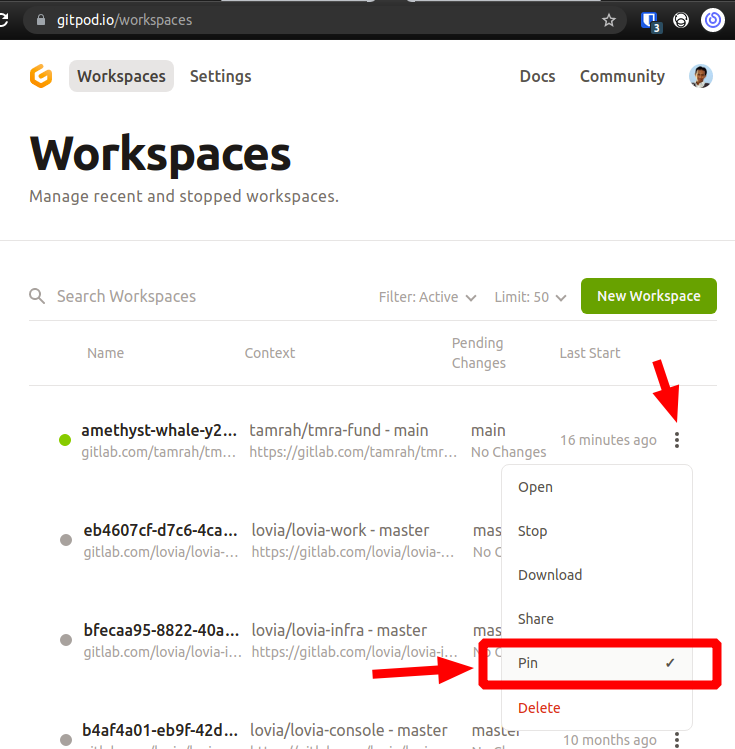

Tip: Pin Your Gitpod Workspace

Pin your workspace on Gitpod so you won’t need to reconfigure your workspace again. Go to https://gitpod.io/workspaces then Pin:

Running “Middy Server” Locally/Offline (Not Recommended)

Hendy’s Warning: Please don’t use this. But in case you want it, you can use serverless-offline.

Deploy All Functions

# Default is dev stage
serverless deploy -s dev

# production stage
serverless deploy -s production

Tail logs in production

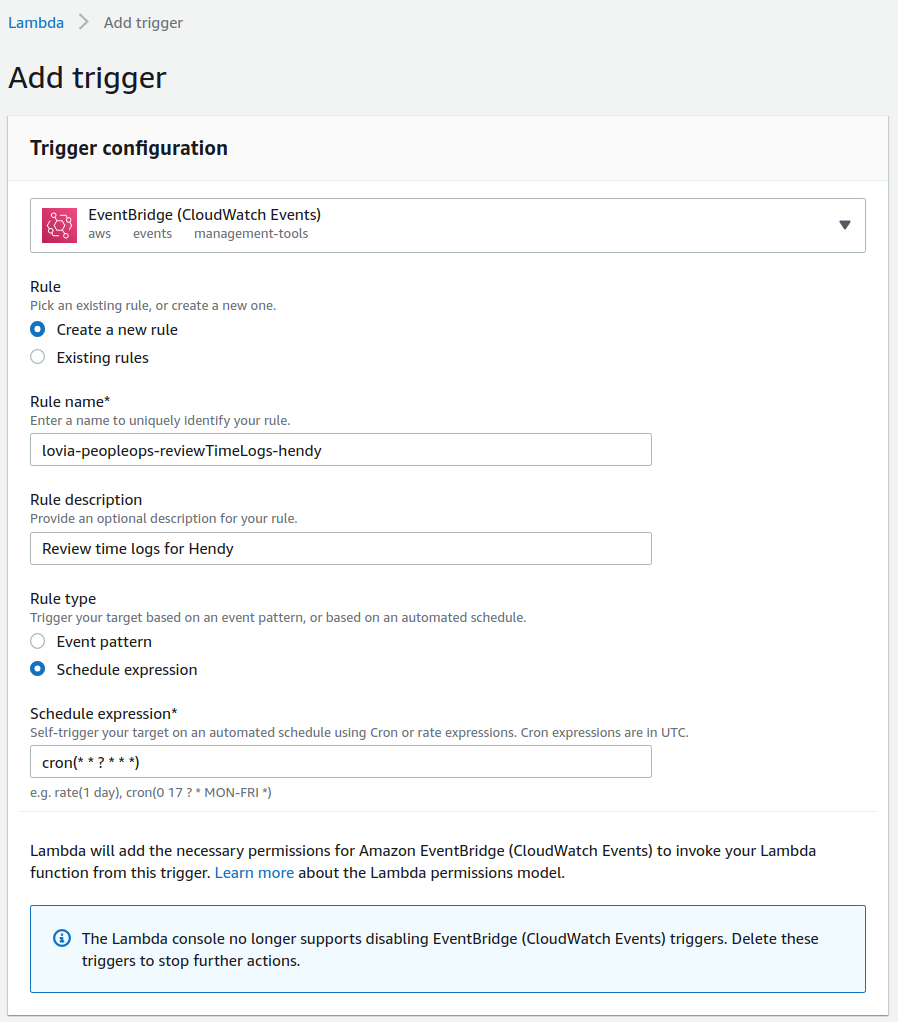

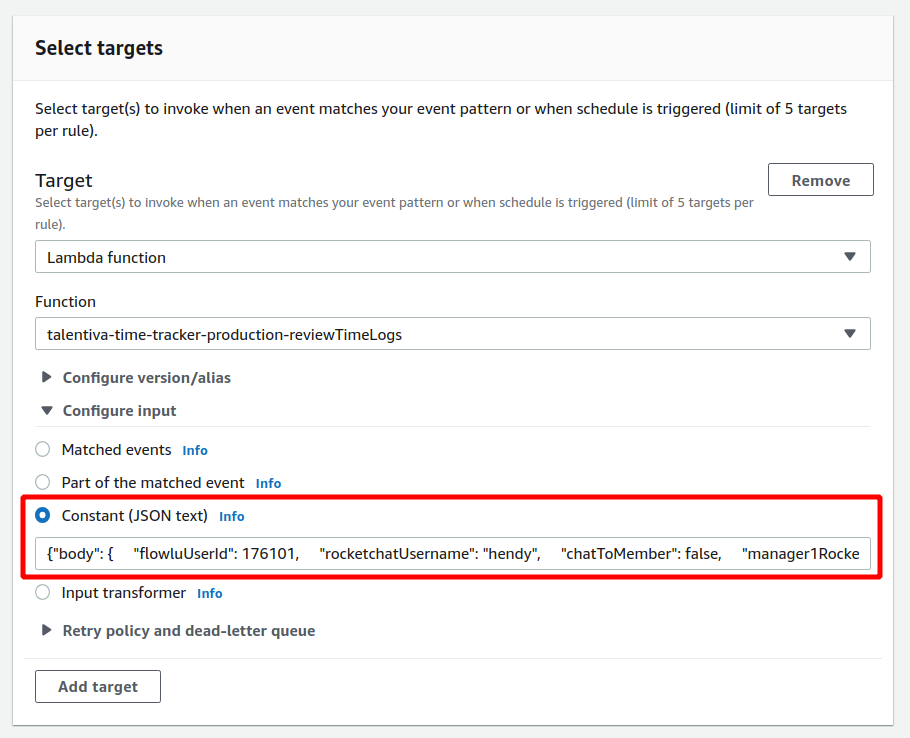

yarn sls logs -s production -f reviewTimeLogs -t

Scheduling A Function using AWS EventBridge

Although it’s possible to schedule a function using Serverless Framework, if you have complex/dynamic/multiple schedules, it’s better to use EventBridge, either manually or via Pulumi IaC.

EventBridge cron expressions are evaluated in UTC. For example, to schedule every working day at 17:30 WIB (UTC+7, so 10:30 UTC):

30 10 ? * MON-FRI *

Make sure to set Target -> Configure input to the input needed by your serverless function. Don’t forget to wrap it in a “body” field.
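EventBridge evaluates cron expressions in UTC, which is why the 17:30 WIB schedule above is written as 10:30. The sketch below illustrates that conversion and the “body” wrapping. `toUtcCronFields` and `buildTargetInput` are hypothetical helper names, the whole-hour UTC+7 offset for WIB is passed in by the caller, and the `userId` payload is made up for illustration.

```typescript
// Convert a local wall-clock time to the six EventBridge cron fields
// (minutes hours day-of-month month day-of-week year), expressed in UTC.
// Hypothetical helper for whole-hour offsets; it refuses conversions that
// cross midnight, because the day-of-week field would need shifting too.
function toUtcCronFields(
  localHour: number,
  localMinute: number,
  utcOffsetHours: number,
  daysOfWeek: string,
): string {
  const utcHour = localHour - utcOffsetHours;
  if (utcHour < 0 || utcHour > 23) {
    throw new Error('Conversion crosses midnight; adjust the day-of-week field manually');
  }
  return `${localMinute} ${utcHour} ? * ${daysOfWeek} *`;
}

// Wrap the function's input in a "body" field, mirroring the event shape
// used with `sls invoke local -d`. The payload itself is an assumption.
function buildTargetInput(payload: Record<string, unknown>): string {
  return JSON.stringify({ body: payload });
}

const cronFields = toUtcCronFields(17, 30, 7, 'MON-FRI');
const targetInput = buildTargetInput({ userId: 'u-123' });
console.log(cronFields);  // 30 10 ? * MON-FRI *
console.log(targetInput); // {"body":{"userId":"u-123"}}
```

The string from `buildTargetInput` is what would be pasted into the target's constant JSON input.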

Get Deployment Configuration Information

yarn sls info -s dev

yarn sls info -s production

Using Serverless Framework in A Monorepo with Lerna

Reference: https://serverless-stack.com/chapters/using-lerna-and-yarn-workspaces-with-serverless.html

Handling Exceptions as HTTP Errors

yarn add http-errors @middy/http-error-handler

Reference: https://www.npmjs.com/package/@middy/http-error-handler
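A minimal sketch of how the two packages fit together, assuming a middy version whose wrapped handlers return promises (v2 or later). The `ReviewEvent` shape and the `userId` check are hypothetical. In the template, the same `.use(httpErrorHandler())` call could presumably be added inside the `middyfy` helper in `@libs/lambda` instead.

```typescript
import middy from '@middy/core';
import httpErrorHandler from '@middy/http-error-handler';
import createError from 'http-errors';

// Hypothetical event shape: the body has already been parsed into an object.
interface ReviewEvent {
  body?: { userId?: string };
}

const reviewTimeLogs = async (event: ReviewEvent) => {
  if (!event.body?.userId) {
    // An error created by http-errors carries a statusCode, which the
    // middleware uses to build the HTTP response.
    throw new createError.BadRequest('userId is required');
  }
  return {
    statusCode: 200,
    body: JSON.stringify({ userId: event.body.userId }),
  };
};

// Without the middleware, the thrown error would surface as a generic
// Lambda failure rather than a 400 response.
export const main = middy(reviewTimeLogs).use(httpErrorHandler());
```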

Uploading Multipart Form Data (NOT Binary Files)

Note: For binary files, either:

- use JSON and put base64 inside

- do not use Lambda; instead, use a different service like S3 to handle the file upload directly from the client

- do not use Lambda; instead, replace this function with a NestJS container-based service
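For the first option, a minimal sketch of carrying a small binary file as base64 inside a JSON body could look like this. The `fileName` and `contentBase64` field names are assumptions, not part of any API, and the sender is assumed to run on Node.js so that `Buffer` is available. Base64 grows the payload by roughly a third, so this only suits small files.

```typescript
// Hypothetical JSON body shape for a small binary upload.
interface UploadBody {
  fileName: string;
  contentBase64: string;
}

// Sender side: encode the raw bytes before putting them into JSON.
function encodeUpload(fileName: string, bytes: Uint8Array): string {
  const body: UploadBody = {
    fileName,
    contentBase64: Buffer.from(bytes).toString('base64'),
  };
  return JSON.stringify(body);
}

// Handler side: decode the base64 field back into bytes.
function decodeUpload(json: string): { fileName: string; bytes: Buffer } {
  const body = JSON.parse(json) as UploadBody;
  return {
    fileName: body.fileName,
    bytes: Buffer.from(body.contentBase64, 'base64'),
  };
}

const json = encodeUpload('hello.txt', new Uint8Array([72, 105])); // bytes of "Hi"
const decoded = decodeUpload(json);
console.log(decoded.fileName, decoded.bytes.toString('utf8')); // hello.txt Hi
```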

yarn add @middy/http-multipart-body-parser

Reference: https://github.com/middyjs/middy/tree/main/packages/http-multipart-body-parser