Rocket.Chat (upstream) is the basis of our Soluvas Chat product.

Resources

- GitHub

- Docker image: rocketchat/rocket.chat

Deployment Architecture

As of 2021-01, our Rocket.Chat servers are deployed on:

- AWS, Singapore region:

- [chat.lovia.life] Fargate Singapore, using Fargate Spot instance

- [chat.soluvas.com] Lightsail 4 GB

- Application Load Balancer

- Fronted by CloudFront (disabled to reduce issues and increase stability during active development)

- [chat.lovia.life] File upload storage in AWS S3 + CloudFront

- CDN may be migrated from CloudFront to BunnyCDN

- [chat.soluvas.com] File upload storage local filesystem

- should be using AWS S3

- should be fronted by either CloudFront or BunnyCDN

- MongoDB Atlas, AWS Singapore region (lovia-sg cluster)

- Push:

- [chat.lovia.life] Preconfigured Push Notification (for now, 1000 messages/month soft cap). Will be migrated to own FCM.

- [chat.soluvas.com] Soluvas’s own FCM

As of 2020-09, our Rocket.Chat was deployed on:

- AWS, Singapore region:

- Fargate Singapore, using Fargate Spot instance

- Fronted by CloudFront, will be migrated from CloudFront to BunnyCDN

- File upload storage in AWS S3 + CloudFront, CDN will be migrated from CloudFront to BunnyCDN

- MongoDB Atlas, AWS Singapore region (lovia-sg cluster)

- Preconfigured Push Notification (for now, 1000 messages/month soft cap). Will be migrated to own FCM.

As of 2020-07, our Rocket.Chat was deployed on:

- DigitalOcean, Singapore region:

- Kubernetes cluster k8s-lovia-sg ($10++/mo)

- File upload storage in AWS S3 + CloudFront

- CloudFront for Kubernetes cluster

- MongoDB Atlas, AWS Singapore region (lovia-sg cluster)

- Preconfigured Push Notification (for now, 1000 messages/month soft cap)

Preparing MongoDB

Rocket.Chat requires a MongoDB replica set, with access to both its own rocketchat database and the oplog (“local”) database. See https://go.rocket.chat/i/oplog-required.

Required users:

- oploguser, with role read on the local database, and clusterMonitor on the admin database

- <regular user>, with role readWrite on the specific Rocket.Chat database, and clusterMonitor on the admin database
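The role assignments above can be spelled out as MongoDB `createUser` command documents. This is only a sketch: the function name, the placeholder password, and the example database and user names are assumptions. On MongoDB Atlas, users are typically created through the Atlas console rather than with `createUser`, so treat the output as a checklist of roles rather than something to run as-is.

```python
import json


def mongo_user_commands(app_db, app_user, oplog_user="oploguser"):
    """Return createUser command documents for the two required accounts.

    Passwords are left as placeholders on purpose.
    """
    return [
        {
            "createUser": oplog_user,
            "pwd": "<set-a-password>",
            "roles": [
                {"role": "read", "db": "local"},
                {"role": "clusterMonitor", "db": "admin"},
            ],
        },
        {
            "createUser": app_user,
            "pwd": "<set-a-password>",
            "roles": [
                {"role": "readWrite", "db": app_db},
                {"role": "clusterMonitor", "db": "admin"},
            ],
        },
    ]


if __name__ == "__main__":
    # "lovia_chat_prd" and "rocketchat" are example names only.
    for command in mongo_user_commands("lovia_chat_prd", "rocketchat"):
        print(json.dumps(command, indent=2))
```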

The database user needs change stream privileges; otherwise you’ll see this message (in stdout/ECS logs during startup):

Change Stream is available for your installation, give admin permissions to your database user to use this improved version.

Reference: Install Rocket.Chat with Docker. See also issue #20017 and issue #20027.

Note: Some people set USE_NATIVE_OPLOG=true and report that it helps, but I’m not sure which setting is recommended.

Fargate Spot Deployment

Summary:

- Create MongoDB database credentials, with dbAdmin + readWrite access to the Rocket.Chat database (e.g. lovia_chat_prd, soluvas_chat_prod) and read access to the oplog “local” database.

- In ECS, create a Task Definition with one container named rocketchat. In the rocketchat container, set these environment variables: MONGO_OPLOG_URL, MONGO_OPTIONS={"ssl": true}, MONGO_URL, and ROOT_URL (without trailing slash). Task resource limits for the initial setup wizard: 0.5 vCPU and 2 GB RAM (minimum). Once stable, it is sometimes possible to reduce to 1 GB RAM, but it may also crash immediately, so it’s better to stay at 2 GB RAM.

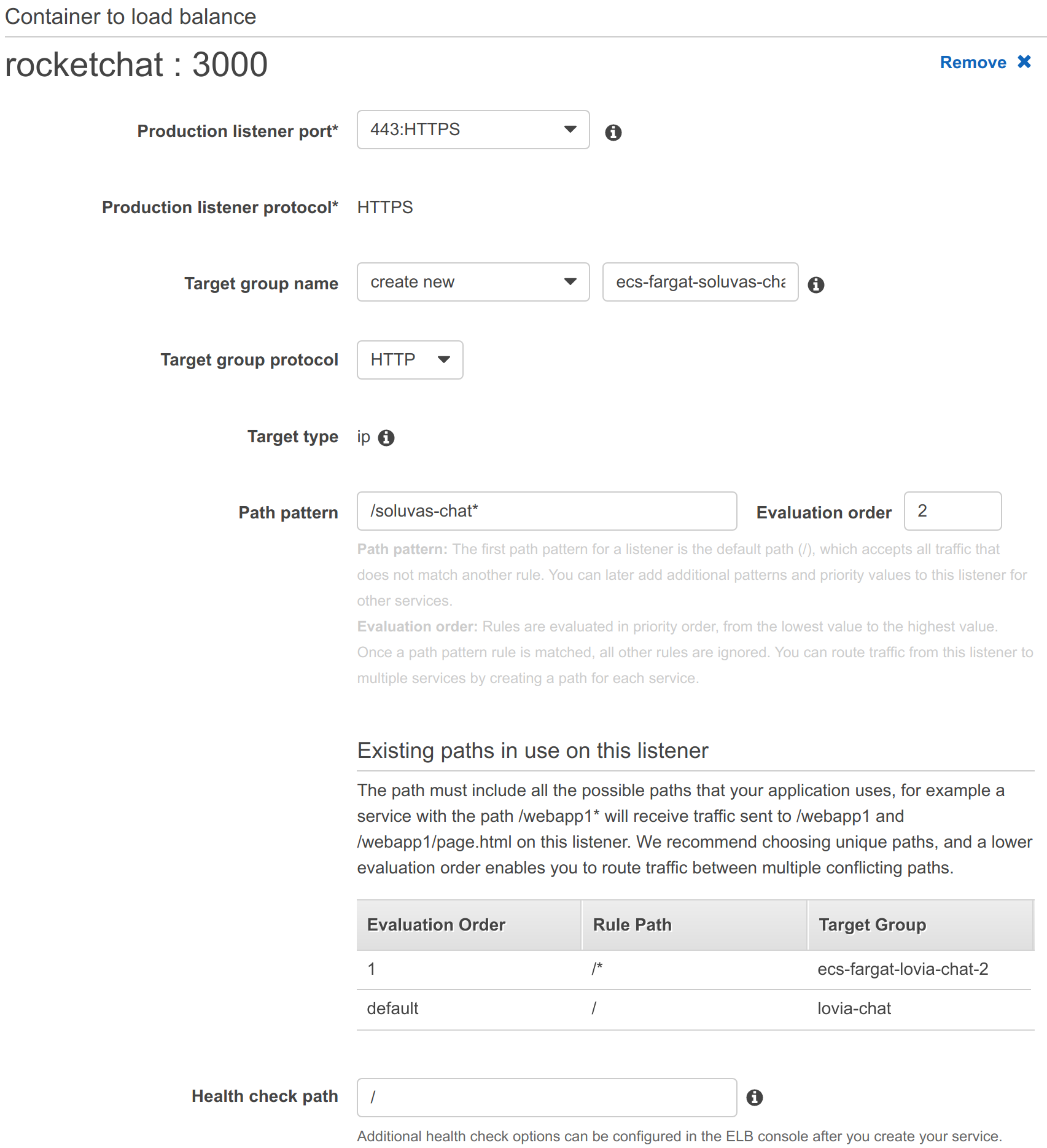

- Launch ECS Service using Fargate Spot cluster. AZ is ap-southeast-1b (Hendy can’t remember exactly, but probably because previously, Spot pricing is lower than 1a, and Graviton2 m6g availability). Security group is rocketchat-3000 (needs to be accessible by Application Load Balancer). When setting up Fargate-ALB configuration, path pattern needs to be set to unique. However, later, you will need to manually change the ALB rule to use Host criteria. Make sure to set Health check path to

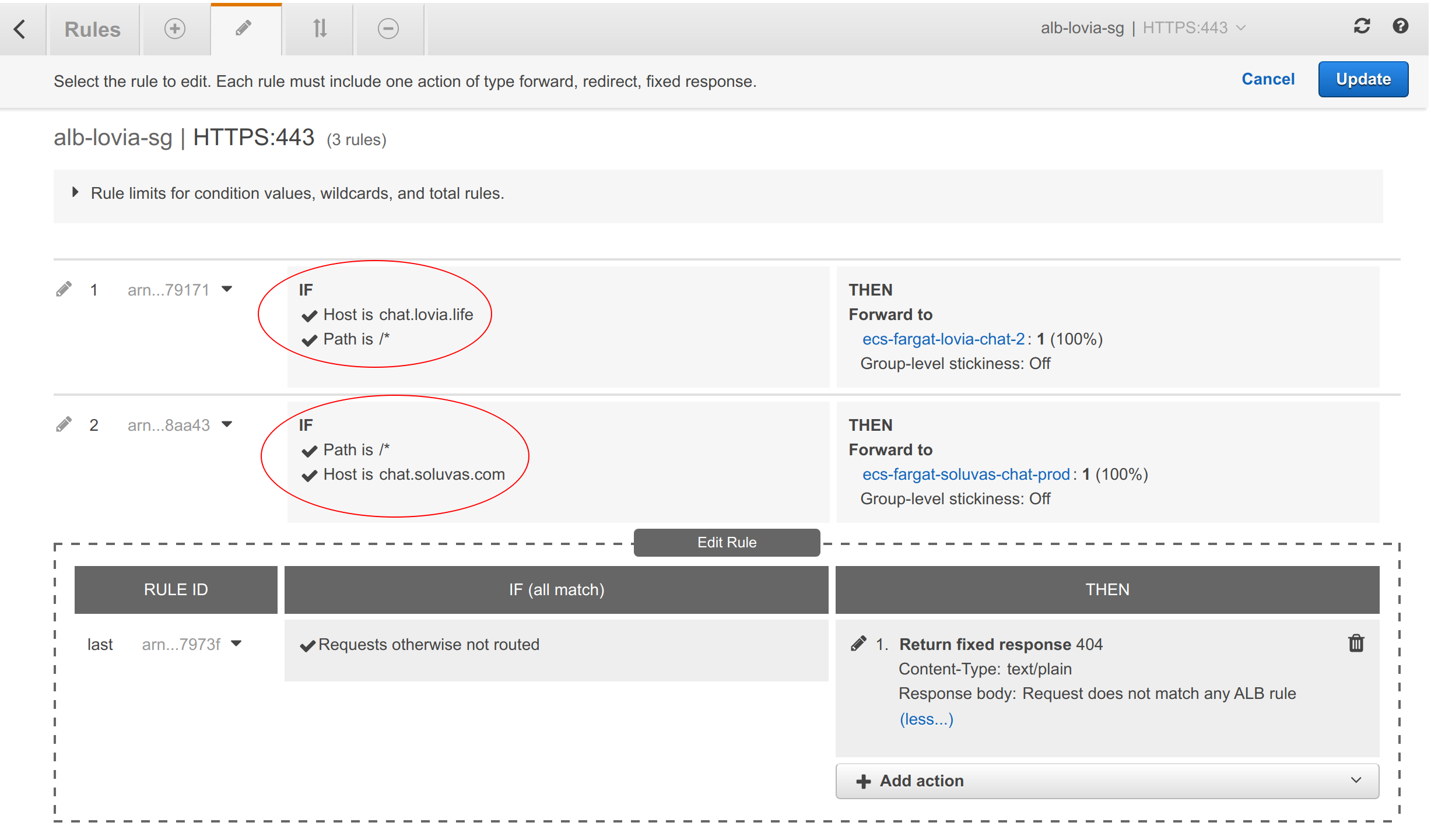

/api/info. - Update Application Load Balancer rule criteria to use Host pattern

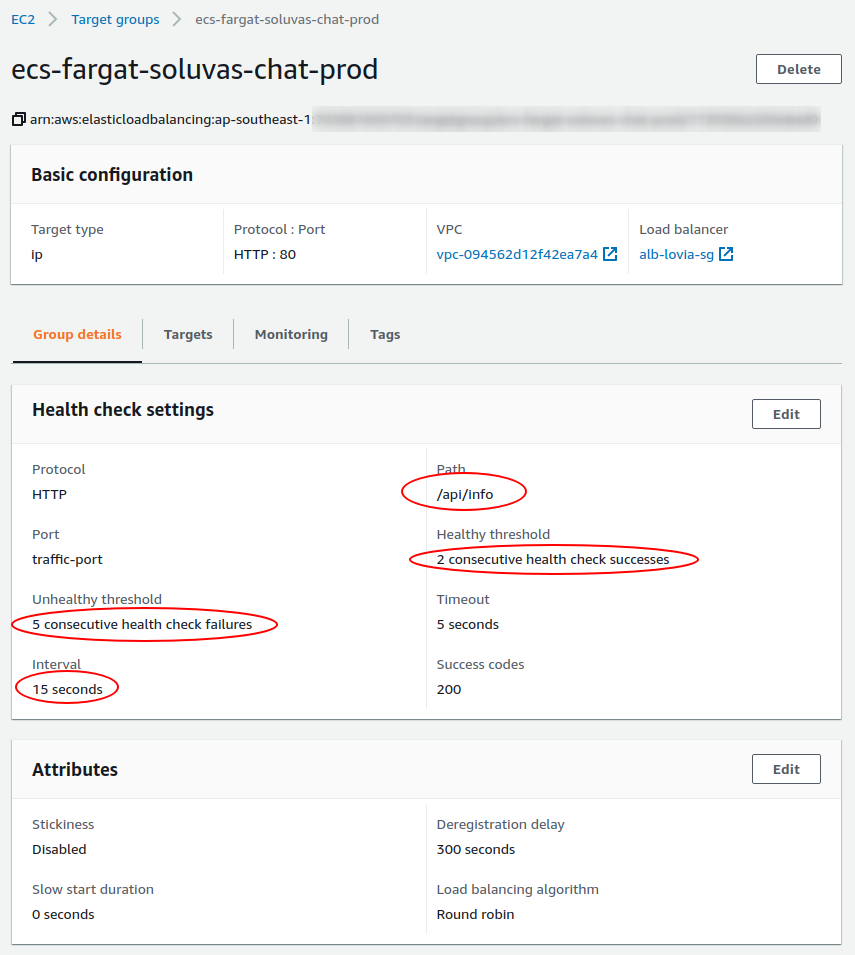

- Configure Target group health check to use 2 successes threshold, 5 errors threshold, and interval 15 seconds.

- Configure DNS: Add CNAME record to Load Balancer

- Do Administration Setup below, for AWS S3 File Uploads, AWS SES, OpenID Connect, etc.
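The four environment variables from the Task Definition step can be assembled roughly as follows. This is a sketch under assumptions: the host names, replica set name, and the `replicaSet`/`authSource=admin` query options are illustrative and must be replaced with the values from your own MongoDB connection string.

```python
import json
from urllib.parse import quote_plus


def rocketchat_env(root_url, hosts, replica_set, app_db, app_user, app_password,
                   oplog_user, oplog_password):
    """Build the environment variables for the rocketchat container."""
    host_list = ",".join(hosts)
    # Assumption: both users authenticate against the admin database.
    options = f"replicaSet={replica_set}&authSource=admin"

    def mongo_url(user, password, database):
        # Percent-encode credentials so characters like '@' or ':' stay valid.
        return (f"mongodb://{quote_plus(user)}:{quote_plus(password)}"
                f"@{host_list}/{database}?{options}")

    return {
        "MONGO_URL": mongo_url(app_user, app_password, app_db),
        # The oplog lives in the "local" database.
        "MONGO_OPLOG_URL": mongo_url(oplog_user, oplog_password, "local"),
        "MONGO_OPTIONS": json.dumps({"ssl": True}),
        # ROOT_URL must not end with a slash.
        "ROOT_URL": root_url.rstrip("/"),
    }


if __name__ == "__main__":
    env = rocketchat_env(
        root_url="https://chat.example.com/",
        hosts=["db0.example.net:27017", "db1.example.net:27017"],  # hypothetical
        replica_set="rs0",  # hypothetical
        app_db="lovia_chat_prd",
        app_user="rocketchat",
        app_password="change-me",
        oplog_user="oploguser",
        oplog_password="change-me",
    )
    for name, value in env.items():
        print(f"{name}={value}")
```

Building the values in one place makes it harder to forget the trailing-slash rule for ROOT_URL or to point MONGO_OPLOG_URL at the wrong database.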

Initial Fargate – ALB configuration. After this, you must change the ALB configuration to use Host criteria and path /*.

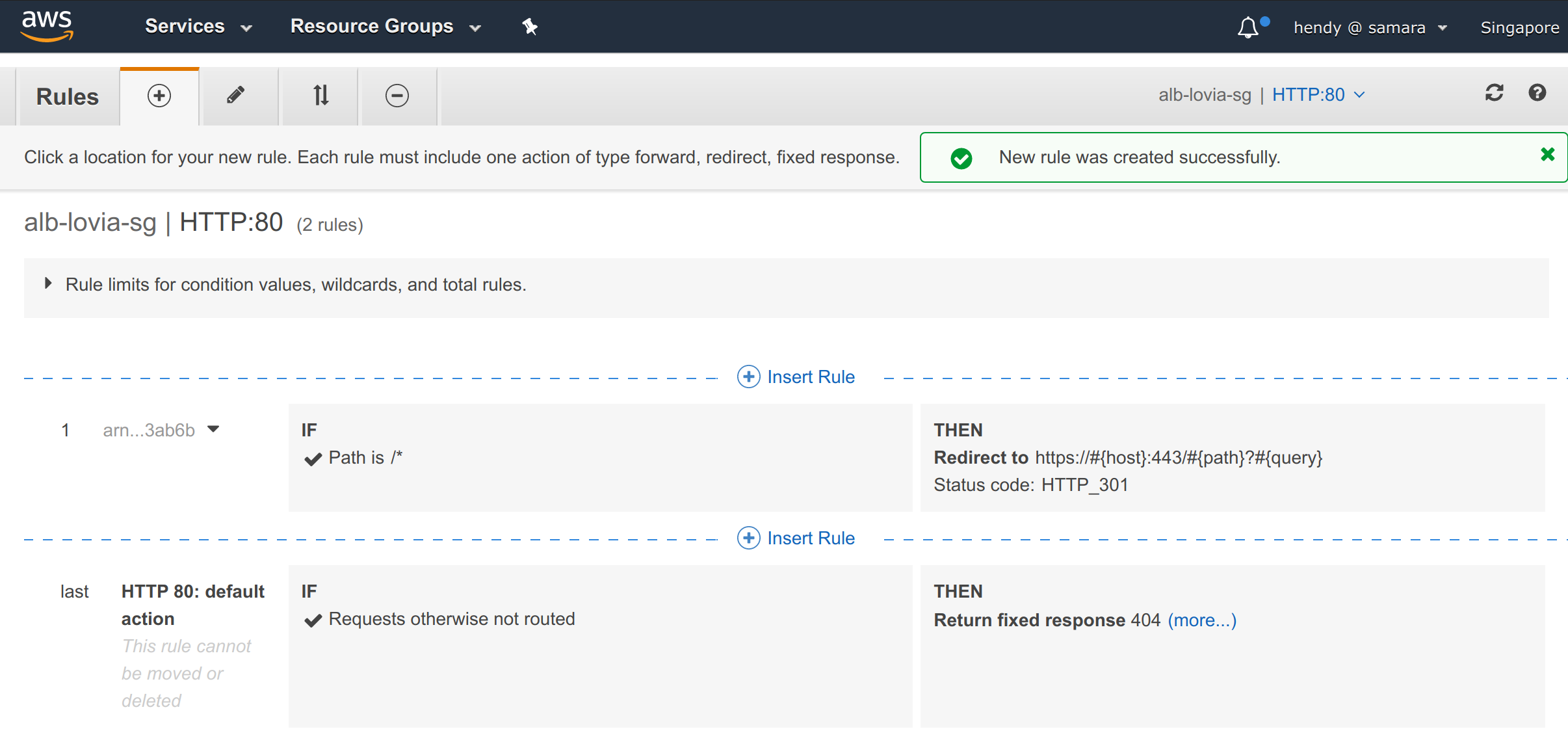

ALB rules for HTTP port 80: Redirect to HTTPS

ALB rules for HTTPS port 443

Target group’s health check path must be /api/info

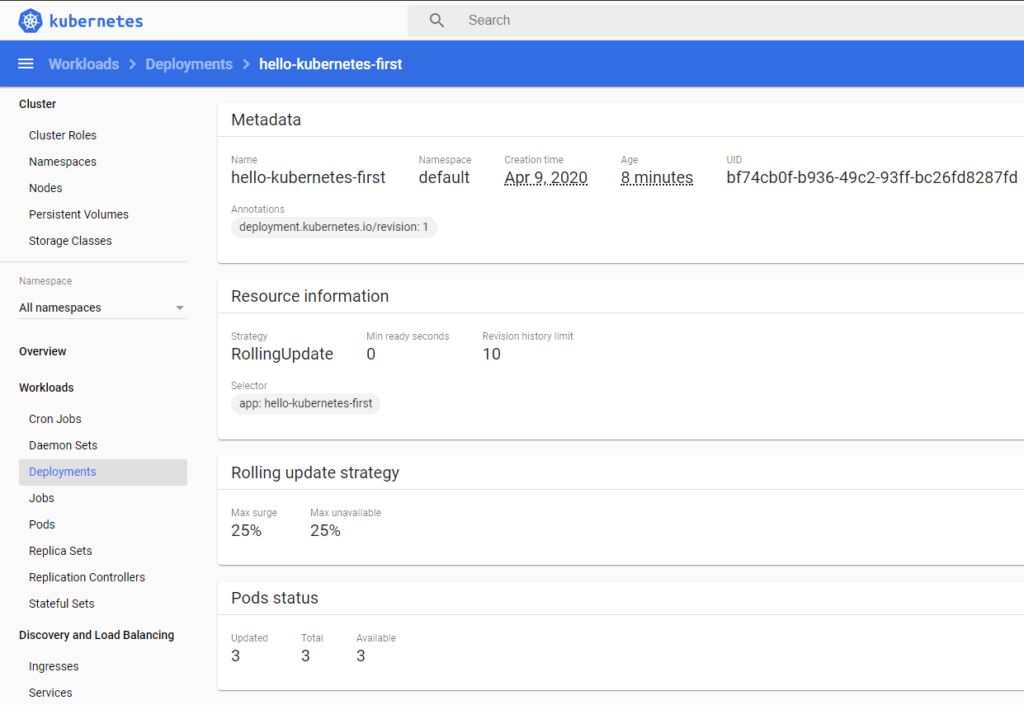
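To check the health endpoint by hand before relying on the target group, a small probe like the one below may help. It is a sketch, not the ALB's own logic: `probe` simply treats an HTTP 200 from /api/info as healthy, and `seconds_to_flip` is a rough estimate of how long the thresholds mentioned above (2 successes, 5 errors, 15-second interval) take to change a target's state.

```python
import urllib.request

HEALTH_PATH = "/api/info"


def health_check_url(root_url):
    """Join the server root URL and the health check path."""
    return root_url.rstrip("/") + HEALTH_PATH


def probe(root_url, timeout=5.0):
    """Return True if the health endpoint answers with HTTP 200."""
    try:
        with urllib.request.urlopen(health_check_url(root_url), timeout=timeout) as resp:
            return resp.status == 200
    except OSError:
        # URLError, HTTPError and socket timeouts are all OSError subclasses.
        return False


def seconds_to_flip(threshold, interval_s=15):
    """Approximate time for `threshold` consecutive checks to complete."""
    return threshold * interval_s


if __name__ == "__main__":
    print("healthy after roughly", seconds_to_flip(2), "seconds")
    print("unhealthy after roughly", seconds_to_flip(5), "seconds")
```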

Kubernetes Deployment (Legacy)

We don’t use the Snap installation because we want the flexibility to customize and deploy bleeding-edge code soon. We use the Rocket.Chat helm chart [source] to set up Kubernetes. (Tip: As an alternative, a Docker Compose installation is available. Kompose may be used to convert docker-compose.yml to Kubernetes easily, but after that’s done we prefer doing it the hard way🤘)

⚠ Warning: There are two “official” Rocket.Chat Docker images. We use rocketchat/rocket.chat, not _/rocket.chat.

Repository: lovia/lovia-devops

Tip: To learn about Ingress & TLS LetsEncrypt in Kubernetes DigitalOcean, see:

- How to Install Software on Kubernetes Clusters with the Helm 3 Package Manager

- How To Set Up an Nginx Ingress on DigitalOcean Kubernetes Using Helm

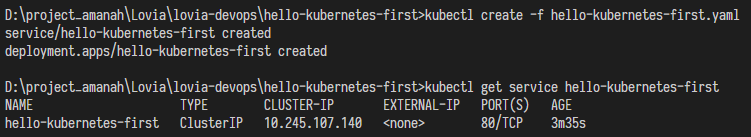

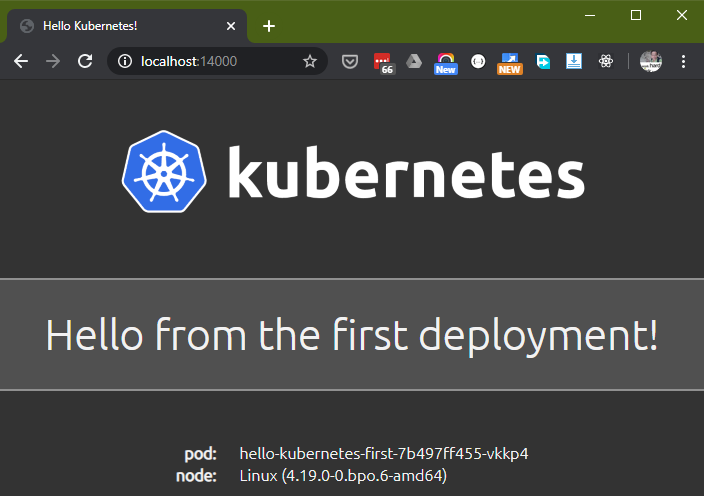

D:\project_amanah\Lovia\lovia-devops\hello-kubernetes-first>kubectl port-forward service/hello-kubernetes-first 14000:80

Forwarding from 127.0.0.1:14000 -> 8080

Forwarding from [::1]:14000 -> 8080

Important: You’ll need to set up the Nginx Ingress Controller first (perhaps with kind: DaemonSet).

Deploy rocketchat-ingress.yaml:

    apiVersion: networking.k8s.io/v1beta1
    kind: Ingress
    metadata:
      name: lovia-chat-ingress
      annotations:
        kubernetes.io/ingress.class: nginx
        # https://github.com/nginxinc/kubernetes-ingress/issues/21#issuecomment-521338887
        nginx.ingress.kubernetes.io/proxy-body-size: 64m
        # https://pumpingco.de/blog/using-signalr-in-kubernetes-behind-nginx-ingress/
        nginx.ingress.kubernetes.io/affinity: cookie
        cert-manager.io/cluster-issuer: letsencrypt-prod
    spec:
      # REQUIRES helm cert-manager
      tls:
        - hosts:
            - chat.lovia.life
          secretName: lovia-chat-tls
      rules:
        - host: chat.lovia.life
          http:
            paths:
              - backend:
                  serviceName: lovia-chat-rocketchat
                  servicePort: 80

Get the application URL by running these commands:

export HTTP_NODE_PORT=$(kubectl --namespace default get services -o jsonpath="{.spec.ports[0].nodePort}" nginx-ingress-controller)

export HTTPS_NODE_PORT=$(kubectl --namespace default get services -o jsonpath="{.spec.ports[1].nodePort}" nginx-ingress-controller)

export NODE_IP=$(kubectl --namespace default get nodes -o jsonpath="{.items[0].status.addresses[1].address}")

echo "Visit http://$NODE_IP:$HTTP_NODE_PORT to access your application via HTTP."

echo "Visit https://$NODE_IP:$HTTPS_NODE_PORT to access your application via HTTPS."

e.g. https://hw1.lovia.life:32020/

Prepare values.yaml first.

Install Rocket.Chat using Helm chart:

helm repo add stable https://kubernetes-charts.storage.googleapis.com

helm install lovia-chat -f values.yaml stable/rocketchat

Administration

File Upload: AWS S3

SAML: Google Cloud Identity Platform

🔗 SAML Authentication in Rocket.Chat

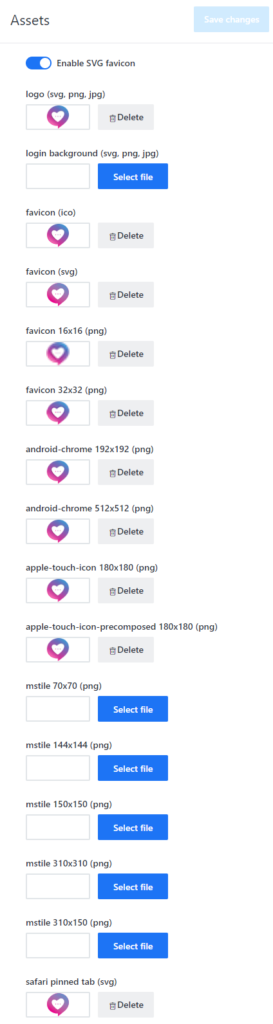

Assets

Make sure icons and Safari pinned tab are set.

OpenID Connect (SSO) with FusionAuth

- Admin > OAuth > Setup FusionAuth OpenID Connect as Custom OAuth Provider.

- URL: https://login.lovia.life

- Token Path: /oauth2/token

- Token Sent Via: Header

- Identity Token Sent Via: Same as “Token Sent Via”

- Identity Path: /oauth2/userinfo

- Authorize Path: /oauth2/authorize

- Scope: offline_access

- Param Name for access token: access_token

- Id/Secret: ***

- Login Style: Redirect

- Button Text: Sign In

- Username field: preferred_username

- Name field: name

- Avatar field: picture

- Roles/Groups field name: roles

- Merge Roles from SSO: checked

- Show Button on Login Page: checked

- Admin > Accounts:

- Disable default login form.

- Disable: Allow User Avatar change.

- Disable: Allow Name change.

- Disable: Allow Username change.

- Disable: Allow Email change.

- Disable: Allow Password change.

- [Optional] Disable: Allow User Profile Change.

- Registration > Registration Form: Disabled.
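With the settings above, the login button redirects the browser to an authorization URL made of the FusionAuth base URL plus the Authorize Path. The sketch below shows how such a URL is put together. The client ID, the `state` value, and the callback path `/_oauth/fusionauth` are assumptions for illustration; check the callback URL that Rocket.Chat displays for your custom OAuth provider.

```python
from urllib.parse import urlencode, urlsplit, parse_qs

AUTHORIZE_PATH = "/oauth2/authorize"


def authorize_url(base_url, client_id, redirect_uri,
                  scope="offline_access", state="example-state"):
    """Build an OAuth 2.0 authorization-code request URL."""
    query = urlencode({
        "client_id": client_id,
        "redirect_uri": redirect_uri,
        "response_type": "code",
        "scope": scope,
        "state": state,
    })
    return f"{base_url.rstrip('/')}{AUTHORIZE_PATH}?{query}"


if __name__ == "__main__":
    url = authorize_url(
        "https://login.lovia.life",
        client_id="my-client-id",  # hypothetical
        redirect_uri="https://chat.lovia.life/_oauth/fusionauth",  # assumed callback
    )
    print(url)
    # Parse it back to confirm which parameters are actually sent.
    print(parse_qs(urlsplit(url).query))
```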

Roles are only synced on first login and are not refreshed on subsequent logins. Please see the bug report for the current state.

Troubleshooting

{"line":"392","file":"oauth_server.js","message":"Error in OAuth Server: Failed to fetch identity from fusionauth at https://login.lovia.life/oauth2/userinfo. failed [401]","time":{"$date":1587229101993},"level":"warn"}

Exception while invoking method 'login' Error: Failed to fetch identity from fusionauth at https://login.lovia.life/oauth2/userinfo. failed [401]

at CustomOAuth.getIdentity (app/custom-oauth/server/custom_oauth_server.js:282:18)

at Object.handleOauthRequest (app/custom-oauth/server/custom_oauth_server.js:291:26)

at OAuth._requestHandlers.<computed> (packages/oauth2/oauth2_server.js:10:33)

at middleware (packages/oauth/oauth_server.js:161:5)

at /app/bundle/programs/server/npm/node_modules/meteor/promise/node_modules/meteor-promise/fiber_pool.js:43:40

Cause/Solution: Whitelist headers in CloudFront.

Admin > Email > SMTP.

- Protocol: smtp

- Host: Southeast Asia: email-smtp.ap-southeast-1.amazonaws.com / N. Virginia: email-smtp.us-east-1.amazonaws.com

- Port: 587

- IgnoreTLS: unchecked

- Pool: unchecked

- Username: SES SMTP username (not IAM access key ID)

- Password: SES SMTP password (not IAM secret access key)

- From Email: with full name, e.g. Soluvas <[email protected]>. Make sure you verify this address in AWS SES domains, in the appropriate AWS region.
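Before entering the SMTP settings in Rocket.Chat, it can be useful to verify the SES credentials independently. The following is a minimal sketch using Python's standard `smtplib`; the addresses are placeholders, and `send_test` is only meant to be run manually with real SES SMTP credentials.

```python
import smtplib
from email.message import EmailMessage
from email.utils import formataddr


def ses_smtp_host(region):
    """Return the SES SMTP endpoint for an AWS region."""
    return f"email-smtp.{region}.amazonaws.com"


def build_message(from_name, from_addr, to_addr):
    """Create a test message whose From header includes the full name."""
    msg = EmailMessage()
    msg["From"] = formataddr((from_name, from_addr))
    msg["To"] = to_addr
    msg["Subject"] = "Rocket.Chat SMTP test"
    msg.set_content("If you can read this, the SES SMTP settings work.")
    return msg


def send_test(region, username, password, msg):
    """Send over port 587 with STARTTLS, matching the settings above."""
    with smtplib.SMTP(ses_smtp_host(region), 587, timeout=10) as smtp:
        smtp.starttls()
        smtp.login(username, password)  # SES SMTP credentials, not IAM keys
        smtp.send_message(msg)


if __name__ == "__main__":
    # Placeholder addresses; the sender must be verified in SES.
    message = build_message("Soluvas", "noreply@example.com", "you@example.com")
    print(ses_smtp_host("ap-southeast-1"))
    print(message["From"])
```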

CloudFront

Discussion: We should probably disable CloudFront on the main app server to minimize issues with caching behavior, SSL, etc. We can enable CloudFront again once we’re more confident about the internal behavior of Rocket.Chat Server, Rocket.Chat React Native, and nginx-ingress. Currently we set CloudFront’s Default TTL to 0, but if problems persist we can disable CloudFront entirely.

chat.lovia.life is fronted by CloudFront CDN d363egvcze92jw.cloudfront.net. These REST API headers must be whitelisted in addition to the usual headers:

- X-User-Id

- X-Auth-Token
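To see why these two headers matter, consider how a REST API call is authenticated. The sketch below builds a request to the `/api/v1/me` endpoint with both headers. The user ID and token are placeholders, and the request is only constructed here, not sent. If CloudFront does not forward the headers, the origin receives the request without credentials.

```python
import urllib.request


def me_request(root_url, user_id, auth_token):
    """Build an authenticated request to the Rocket.Chat REST API."""
    return urllib.request.Request(
        root_url.rstrip("/") + "/api/v1/me",
        headers={
            "X-User-Id": user_id,
            "X-Auth-Token": auth_token,
        },
    )


if __name__ == "__main__":
    req = me_request("https://chat.lovia.life", "example-user-id", "example-token")
    print(req.full_url)
    # urllib normalizes header names, e.g. "X-user-id".
    print(req.header_items())
```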

File Upload: Switch from GridFS to AWS S3

It’s not entirely clear why Rocket.Chat recommends GridFS. Logically it adds overhead, because the app server has to act as a “reverse proxy” for GridFS. So we think AWS S3 is better suited for Rocket.Chat file upload storage.

Quick steps:

- Create media-chat.lovia.life bucket in ap-southeast-1 region

- Unblock public access

- Set bucket CORS configuration:

    <?xml version="1.0" encoding="UTF-8"?>
    <CORSConfiguration xmlns="http://s3.amazonaws.com/doc/2006-03-01/">
      <CORSRule>
        <AllowedOrigin>https://chat.lovia.life</AllowedOrigin>
        <AllowedMethod>PUT</AllowedMethod>
        <AllowedMethod>POST</AllowedMethod>
        <AllowedMethod>GET</AllowedMethod>
        <AllowedMethod>HEAD</AllowedMethod>
        <MaxAgeSeconds>3000</MaxAgeSeconds>
        <AllowedHeader>*</AllowedHeader>
      </CORSRule>
    </CORSConfiguration>

- Create IAM user lovia-rocketchat with limited inline policy:

    {
      "Version": "2012-10-17",
      "Statement": [
        {
          "Sid": "ListObjectsInBucket",
          "Effect": "Allow",
          "Action": [
            "s3:ListBucket"
          ],
          "Resource": [
            "arn:aws:s3:::media-chat.lovia.life"
          ]
        },
        {
          "Sid": "AllObjectActions",
          "Effect": "Allow",
          "Action": [
            "s3:*Object",
            "s3:*ObjectAcl"
          ],
          "Resource": [
            "arn:aws:s3:::media-chat.lovia.life/*"
          ]
        }
      ]
    }

- Create CloudFront distribution for media-chat.lovia.life

- Add the CloudFront distribution CNAME (not proxied) to Cloudflare DNS

- Use Alternate CNAME media-chat.lovia.life
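When repeating these steps for another bucket (for example a future S3 bucket for chat.soluvas.com), the CORS rule and IAM policy can be generated instead of edited by hand. The sketch below mirrors the two documents from the steps above. The CORS rule is emitted in the JSON form that, as far as we know, the current S3 console expects in place of XML. The function names are illustrative only.

```python
import json


def cors_rules(origin):
    """Return the bucket CORS rule as a JSON-style list of rules."""
    return [
        {
            "AllowedHeaders": ["*"],
            "AllowedMethods": ["PUT", "POST", "GET", "HEAD"],
            "AllowedOrigins": [origin],
            "MaxAgeSeconds": 3000,
        }
    ]


def upload_policy(bucket):
    """Return the limited inline IAM policy for the given bucket."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "ListObjectsInBucket",
                "Effect": "Allow",
                "Action": ["s3:ListBucket"],
                "Resource": [f"arn:aws:s3:::{bucket}"],
            },
            {
                "Sid": "AllObjectActions",
                "Effect": "Allow",
                "Action": ["s3:*Object", "s3:*ObjectAcl"],
                # Object-level actions apply to keys inside the bucket.
                "Resource": [f"arn:aws:s3:::{bucket}/*"],
            },
        ],
    }


if __name__ == "__main__":
    print(json.dumps(cors_rules("https://chat.lovia.life"), indent=2))
    print(json.dumps(upload_policy("media-chat.lovia.life"), indent=2))
```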

Rocket.Chat File Upload Configuration:

Bucket name: media-chat.lovia.life

Acl: (leave blank)

CDN Domain for Downloads: media-chat.lovia.life

Region: ap-southeast-1

CSS & Fonts

As recommended by our logo designer Dany Nofiyanto, we use Baloo 2 for headings and Montserrat / Open Sans for body text.

Administration > Layout:

CSS:

@import url('https://fonts.googleapis.com/css2?family=Open+Sans:ital,wght@0,400;0,700;1,400;1,700&display=swap');

@import url('https://fonts.googleapis.com/css2?family=Montserrat:ital,wght@0,400;0,700;1,400;1,700&display=swap');

Font > Body text: 'Open Sans', sans-serif or 'Montserrat', sans-serif

Bugsnag

Bugsnag is not required for server, but helpful. Bugsnag is required for Rocket.Chat mobile app development & packaging.

Administration > General > Bugsnag : Set API Key

Google Summer of Code

Rocket.Chat was a Google Summer of Code organization in 2020, and hopefully will be again in upcoming years. It’s good to support this effort by becoming mentors and/or students.